Introduction

There are a lot of posts advising people to study this or that language. A relatively recent article on this topic I enjoyed was Michael O. Church’s Six languages to master. He picks Python, C, ML, Clojure, and Scala — and English. I’d like to offer a slight alternative. I fully agree on Python and C, but I differ in my recommendation for a functional language.

Based on my experience, I recommend the following mix if you want to make the most of your time: Python, C, Haskell, and one language that is widely used in the industry to get your foot in the door. Your learning shouldn’t stop at that point, but thankfully one of the benefits of being versed in multiple paradigms is that you will find it easy to pick up a new language. Indeed, the benefit of Python, C, and Haskell is that they expose you to vastly different programming paradigms. Further, for all three languages there are well-developed tools available, and they are surrounded by active communities.

A Multi-paradigm Language: Python

Many CS curricula start out with an introductory or remedial programming course in Java, and more recently there has been a push towards Python at some schools. Java is hopelessly bloated, even though there are attempts to modernise the language, for instance with the recent introduction of anonymous functions in Java 8. Still, the language is way too verbose, and virtually impossible to program in without an IDE. Further, it forces object-orientation upon its users (in my biased view a detour in the evolution of programming languages, if not a cul-de-sac), and does so in a rather awkward manner. There is too much work involved. While a good IDE can make writing Java code less tedious, the resulting code is nonetheless too cumbersome.

On the other hand, Python is a relatively elegant language that allows you to grow as a programmer. I would not recommend it for any serious work, due to its lack of static typing, but it’s fairly easy to get started with it. For a beginner it’s arguably more motivating to be able to execute a half-broken program than to be told over and over by the compiler that her types don’t match. At first, you can write short sequential programs. Afterwards, you can learn about object-orientation if you want to, and you’ll even find typical functional programming constructs in Python, such as higher-order functions or list comprehensions.
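To give a flavour of those functional constructs, here is a minimal sketch. The names `apply_twice`, `increment`, and `even_squares` are made up for illustration; `map` is built in and `reduce` lives in the standard `functools` module.

```python
from functools import reduce


def apply_twice(f, x):
    """Higher-order function: it takes another function as an argument."""
    return f(f(x))


def increment(n):
    return n + 1


# List comprehension: squares of the even numbers below 10.
even_squares = [n * n for n in range(10) if n % 2 == 0]

# map and reduce both take a function as their first argument.
doubled = list(map(lambda n: 2 * n, [1, 2, 3]))
total = reduce(lambda acc, n: acc + n, even_squares, 0)

print(apply_twice(increment, 5))  # 7
print(even_squares)               # [0, 4, 16, 36, 64]
print(doubled)                    # [2, 4, 6]
print(total)                      # 120
```

All of this runs as a plain script, with no classes and no type declarations, which is part of what makes the language approachable for a beginner.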

Even better, a simple code editor will perfectly suffice to get work done. It’s possible to achieve quite a bit in an interactive environment in a browser. I’ve completed a number of MOOCs that were using Python as a teaching language, and it turned out that it is feasible to write programs with a few hundred lines in an environment like CodeSkulptor. As a budding programmer you could do a lot worse for a first language.

Personally, I hope that Pyret, developed by the PLT group at Brown University, will gain some traction in computer science education, since it is a better design than Python overall, and even provides a path towards handling types with confidence.

A Systems Language: C

James Iry describes C as “a powerful gun that shoots both forward and backward simultaneously”. Indeed, C is the kind of language that should be written by machines, not humans. There are domain-specific languages that do exactly that: because they only allow a subset of all possible C programs to be written, certain guarantees can be made about the resulting code.

When I first worked with C, I was quite taken aback by the lack of support from the compiler. There were plenty of instances where I wondered why there was no built-in check, for instance when trying to modify variables that were declared but not initialised. I can’t think of a case where anybody would want to do that. The common counter-argument is that C is intended for programmers who know what they are doing. The sad reality, though, is that the arrogance you often encounter in C programmers is entirely misplaced. Sure, if you pay really close attention, your code will be error-free. However, it is very easy to make mistakes in C, and people make a lot of them. Alas, this is the root of null-pointer dereferences, segmentation faults, buffer overflows, and memory leaks. I don’t want to know what working in C was like before the advent of Valgrind.

Given this rather negative impression, why should you bother to learn C at all? For one, it will teach you about the relation between the code you write and the machine it is executed on, since your code will map rather closely to the underlying hardware. You can directly access memory locations, for instance, which is beneficial when programming embedded systems. Furthermore, there are virtues in the language itself, like its simplicity. The entire language is described in a short and well-written book that has been around for almost four decades now.

You might never have to write C code in your professional career. However, there are plenty of instances when it will come in handy, even if your application is written in a higher-level language. Quite a few languages allow you to call C through so-called foreign-function interfaces, and maybe implementing that one tight loop in C will give your program a competitive edge.
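As a rough illustration of such a foreign-function interface, the sketch below calls the C standard library’s `strlen` from Python via the standard `ctypes` module. It assumes a POSIX system (Linux or macOS), where passing `None` to `ctypes.CDLL` exposes the symbols already loaded into the interpreter; the wrapper name `c_strlen` is made up for this example.

```python
import ctypes

# Assumption: a POSIX system. CDLL(None) gives access to the symbols already
# loaded into the running process, which include the C standard library.
libc = ctypes.CDLL(None)

# Declare the C signature: size_t strlen(const char *s);
libc.strlen.argtypes = [ctypes.c_char_p]
libc.strlen.restype = ctypes.c_size_t


def c_strlen(data: bytes) -> int:
    """Return the length of a byte string as computed by C's strlen."""
    return libc.strlen(data)


print(c_strlen(b"hello"))  # 5
```

Calling `strlen` this way is of course slower than Python’s own `len`. The point is only the mechanism: the same approach lets you load a shared library that contains your own tight loop.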

I’m not very familiar with developments in systems-level languages, but Mozilla’s Rust programming language might be a strong contender to replace C in many domains. That language is not yet production-ready, but it seems to be an enormous improvement over C. On the other hand, given how ubiquitous software written in C is, one might reasonably doubt whether C will ever be replaced. It’s more likely that it will be relegated to an ever-shrinking niche, in addition to never-ending maintenance work on existing systems written in C, which won’t be replaced.

A Functional Language: Haskell

There are many functional programming languages you could choose to learn. I’m admittedly biased because I study in a department that has played a fundamental role in the development of Haskell. Consequently, I was able to do some university coursework in that language, instead of Java or C++, as is common in so many other computer science departments. The first functional language I learnt was the Lisp dialect Scheme, though, and I’m familiar with a few others.

Haskell has a very mature ecosystem, a fast compiler, and powerful libraries. Further, there are excellent textbooks available, ranging from the beginner to the advanced professional level. Those are all ancillary factors, but with them in place you will have much less reason to turn up your nose at the beautiful syntax of Haskell, its expressiveness, and the sheer elegance that is achievable in its code with some practice. But don’t worry, if you really want to, you can write some downright horrendous code in it, too. The static type system might infuriate you at first, but once you’ve gotten used to it, you may sorely miss it when working in a language without type inference.

Quite a few students who are exposed to Haskell at university avoid this language like the plague afterwards, but for others it becomes their go-to language. When I’m working on some code for myself, or as an exercise, I often write the first version in Haskell, due to the fact that it is so straightforward to map the program logic you want to express to actual code. This helps you to check your understanding of the problem, and solve conceptual issues.

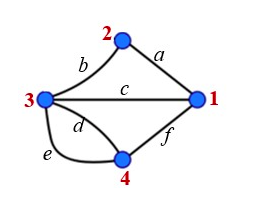

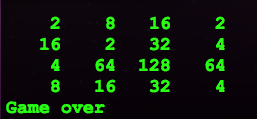

In CS there is the cliché that “X will make you a better programmer”. No matter what it is — arcane theory, technologies, languages — everything supposedly has the potential to turn you into a better programmer. I have my doubts about this claim in general, given that it is not at all uncommon that computer science students can barely program, thanks to mandatory group work and merely optional programming courses. Still, if you take Haskell seriously, it will expose you to a different model of programming. I’ve given some examples previously. For instance, in one post I highlighted the elegant syntax of Haskell, in another I described a very concise implementation of the game 2048. I hope this will make you at the very least curious about this language.

Another potential future benefit, which is the big hope of people wanting to see greater real world use of functional languages, is the fact that it is much easier to parallelise purely functional code. In some domains this is eminently useful. Lastly, unlike imperative languages, functional languages are all rather similar. If you know one, you can very easily pick up another one. The switch from Haskell to any other functional programming language will take much less time than switching from one imperative language to another, maybe with the exception of C# and Java.
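A small sketch of why purity helps, written in Python rather than Haskell so that it matches the other examples here. The function `collatz_steps` is a made-up example of a pure function: its result depends only on its argument, and it touches no shared state, so the calls can be handed to a pool of workers in any order without changing the outcome.

```python
from concurrent.futures import ThreadPoolExecutor


def collatz_steps(n: int) -> int:
    """Pure function: the result depends only on n, and nothing outside is modified."""
    steps = 0
    while n != 1:
        n = n // 2 if n % 2 == 0 else 3 * n + 1
        steps += 1
    return steps


inputs = list(range(1, 11))

# Sequential version.
sequential = [collatz_steps(n) for n in inputs]

# The same computation spread over four worker threads. No locks are needed
# because the function shares no mutable state between calls.
with ThreadPoolExecutor(max_workers=4) as pool:
    parallel = list(pool.map(collatz_steps, inputs))

print(sequential == parallel)  # True
```

Note that threads in CPython will not necessarily make CPU-bound work like this faster. The sketch only shows that the parallel version needs no changes to the function itself, which is the property a purely functional language gives you everywhere.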

But why not ML, Scala, or a Lisp?

Haskell is simply more advanced than Standard ML or OCaml, the only ML dialects that are widely used. Further, their respective communities are smaller. The IRC channel #haskell is generally very busy, while #ocaml sometimes feels like a ghost town. This might be an important aspect when learning a language. On the other hand, working with mutable state in OCaml is more straightforward. Therefore, it might be easier to get used to OCaml if you’ve never done any functional programming.

Scala is an immensely bloated language. My instinctive reaction to Scala was that something that ugly can’t have a clean implementation, and consequently I was not overly surprised when Paul Phillips, the main compiler writer on the Scala team, called it quits, and went on what seems like a retribution tour, spilling the beans on the nastiness hidden in the Scala compiler. It’s quite fascinating to watch his presentations. His talk We’re Doing it All Wrong will probably give you some good reasons to stay clear of Scala. I’ve learnt that the reality is even more unpalatable than I thought, given that the Scala team went out of their way to obfuscate (!) some of the complexities and poor design decisions.

Lisp is a nice language to explore, but maybe not as a first functional language. The syntax is somewhat awkward, and the lack of type inference can be frustrating. The neat thing about it is that when you’re writing Lisp code, you’re writing a parse tree, which leads to a possibly surprising amount of flexibility. I’d recommend Gregor Kiczales’ Coursera MOOC Introduction to Systematic Program Design, which uses the Lisp dialect Racket. In some corners, the Lisp dialect Clojure is all the rage. If you have to target the JVM, then using that language is arguably quite tempting. Personally, I’d rather use Clojure than Scala.
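The parse-tree idea can be imitated in Python with nested tuples. This is only a toy sketch, not how any real Lisp is implemented: the mini-language, the `OPERATORS` table, and the functions `evaluate` and `swap_operator` are all invented for illustration.

```python
import operator

# Hypothetical mini-language: an expression is either a number or a tuple
# of the form (operator_symbol, operand, operand, ...).
OPERATORS = {"+": operator.add, "-": operator.sub, "*": operator.mul}


def evaluate(expr):
    """Evaluate a nested-tuple expression tree recursively."""
    if isinstance(expr, (int, float)):
        return expr
    symbol, *operands = expr
    values = [evaluate(operand) for operand in operands]
    result = values[0]
    for value in values[1:]:
        result = OPERATORS[symbol](result, value)
    return result


def swap_operator(expr, old, new):
    """Code is data: rewrite the tree before evaluating it."""
    if not isinstance(expr, tuple):
        return expr
    symbol, *operands = expr
    return (new if symbol == old else symbol,
            *(swap_operator(operand, old, new) for operand in operands))


# Corresponds to the Lisp expression (+ 1 (* 2 3)).
tree = ("+", 1, ("*", 2, 3))

print(evaluate(tree))                           # 7
print(evaluate(swap_operator(tree, "*", "+")))  # 6
```

Because the program is an ordinary data structure, a function like `swap_operator` can transform it before it runs. That is roughly the flexibility Lisp macros offer, with the difference that in Lisp the tree is the source code itself.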

A Practical Language that Pays the Bills

You’ve got some experience in Python, C, and Haskell, but will this get you a job? While there are jobs available in which any of these languages is used, they will hardly maximise your chances of finding gainful employment. Python is a good language to know, but larger Python programs are rather fragile, due to the lack of static type checking. C is common in embedded programming. This seems to be a good field to work in, but it’s a bit of a niche. It might also require a much more specialised education, like a specialised MSc degree instead of a more open-ended degree in computer science or software engineering.

Compared to Haskell, embedded programming is a huge niche. A very small number of companies are using Haskell, and normally only in small teams. Credit Suisse was a name that used to be mentioned all the time in functional programming circles when there was a need to prove the real-world relevance of Haskell, but, according to online sources, that team was disbanded. Standard Chartered employs some of the most well-known Haskell programmers, and there are some lesser-known companies using functional languages, but there are not a lot of them, and many are highly specialised. A PhD almost seems like a prerequisite.

A more realistic option is therefore picking up one language and a relevant framework. With a solid grounding in languages from various paradigms, learning a new language is quite easy, as I wrote before. I’d recommend looking into your local job market, or the job market in cities you would like to work in, and tailor your education accordingly.

My impression is that Ruby on Rails is very popular, and there are countless jobs available. If you’ve been exposed to Java at university, then you could hope that your employer lets you use the more advanced features that were introduced in Java 8, which make this language more palatable. C# is often touted as a more pleasant alternative to Java, so that may be a direction to go into. Good knowledge of Java can also be leveraged for Android. Speaking of mobile applications, iOS development is still an exciting field. I had a look at Objective-C and wasn’t too pleased, but Apple is now fully committed to Swift, which might be an attractive alternative.

Knowing those three plus one languages, you’ll be far ahead of the typical graduate. Honestly, though, you’ll be way ahead of the curve if you can code up FizzBuzz, but that’s a topic for another day.